What is UX Heuristics Compass?

UX Heuristics Compass is a local-first usability audit toolbox for websites, app screens, prototypes, screenshots, and short task flows. It helps a reviewer find what feels confusing, hidden, inconsistent, inaccessible, cognitively heavy, or hard to recover from, then ranks the fixes that matter most.

The beta is built around a 14-part heuristic system: Nielsen Norman Group's 10 usability heuristics plus accessibility and ease of access, empathetic engagement and inclusion, customer journey and satisfaction, and UX writing.

Not sure what to download?

Pick the file that matches where you work.

Start with the host app you use most. You can install more than one format later if you want both the tool connection and the prompt-only fallback.

Claude Code / Cowork Plugin

Plugin Zip. Use this if you already work in Claude Code or Claude Cowork. This bundle includes the local MCP, skill, and host guidance.

Public beta

Download and Open Guide

Claude Desktop Extension

MCPB/Desktop Extension. Use this if you already have Claude Desktop installed. This connects Claude Desktop to the local UXHC tools.

Public beta

Download and Open Guide

Skill / Prompt-Only Alternative

Use this when you want prompt guidance without the MCP tools, or when your host app cannot use local connectors yet.

Public beta

Download Skill

Download Skill Zip

Pick Your Path

Downloads are live for beta.1. Choose the plugin for Claude Cowork or Claude Code, the MCPB/Desktop Extension for Claude Desktop, or the standalone skill when tool access is unavailable.

| Your goal | Prompt to use |
| --- | --- |
| I want a fast first read | Use the quick audit prompt to review a screenshot, page capture, or small task flow. |
| I need the full checklist | Use the full audit prompt for scored 0-4 checklist ratings and a UX Health Snapshot. |
| I want the AI to ask questions | Use the advanced audit prompt with human-loop questions for context, calibration, and report readiness. |
| I want multiple perspectives | Use the multi-agent prompt for general UX, accessibility, and UX-writing perspectives. |
| I am testing implementation details | Use the implementation-aware prompt with screenshots, source notes, rendered previews, or browser observations. |

Your First Prompt

Copy a prompt into Claude, Codex, or another host AI. If the host has a native question UI, it should use that for the context questions instead of asking in plain chat.

Skill Audit

When to use: You want a prompt-only UX review before the MCP package is installed.

MCP Quick Audit

When to use: You have UXHC installed and want a compact audit.

Full Checklist Audit

When to use: You want scored findings across the active heuristic checklist.

Advanced Human-Loop Audit

When to use: You want context, calibration, and report-readiness questions built into the audit.

Multi-Agent UX Panel

When to use: You want several UX perspectives without pretending there was a real user study.

UX Coaching

When to use: You want help turning findings into practical fixes.

What Is UX Heuristics Compass?

UX Heuristics Compass is a local UX review assistant for visible interfaces. It helps you evaluate a website, app screen, prototype, screenshot, or short task flow before it ships, gets assigned, or gets shown to stakeholders.

The plain-language version: it looks for places where people are likely to get confused, miss the next step, struggle to recover from an error, lose trust, or need more effort than the task should require. It turns those observations into a scored UX Health Snapshot, a short issue ledger, and a prioritized fix list.

It does not pretend to replace real usability testing. It is the faster earlier pass: a structured way to catch likely usability problems, name evidence limits, and decide what should be fixed before you spend time recruiting users or polishing a report.

How It's Built

UXHC is built as a local-first MCP with deterministic heuristic data, checklist-level scoring, source preparation, bounded URL capture, report validation, local report artifacts, human-loop guidance, and agent-audit aggregation.

The host AI owns conversation, visual interpretation, and user-facing explanation. UXHC owns the rubric structure, scoring contracts, report readiness, source boundaries, local persistence, and artifact generation so the audit does not become generic design commentary.

The public beta also includes a standalone skill and plugin packaging. The skill can coach a lighter Markdown-only review when the MCP is unavailable; the MCP adds structured source prep, validation, persistence, report generation, and multi-agent aggregation.

What's Inside

The beta includes 14 heuristic records and 204 possible desktop/mobile checklist items. The core 10 heuristics come from Nielsen Norman Group's usability heuristic framework. UXHC adds four optional general profiles for accessibility, inclusion, customer journey, and UX writing.

This is an early beta. The core audit contract is stable enough to present publicly, but some tool wording and output shapes may still change as local testing tightens the package.

The current MCP surface is listed below so host AIs and technical reviewers can understand what the package exposes.

get_started- Gives first-use guidance, recommended next steps, and the current audit workflow.

ux_heuristic_overview- Explains the UXHC rubric, boundaries, audit modes, and host responsibilities.

list_heuristics- Lists the 10 core heuristics plus the four optional profiles and their checklist counts.

prepare_ux_source- Creates a bounded source manifest from screenshots, image bundles, page notes, or limited public URL captures.

quick_scan_ux- Runs a compact UX audit when source context and ratings are available.

audit_ux_surface- Runs the fuller audit flow with checklist scoring, evidence limits, and human-loop support.

validate_ux_report_payload- Checks whether an audit payload is ready for report generation.

generate_ux_audit_report- Writes local Markdown, HTML viewer, and optional PDF report artifacts.

calibrate_ux_sensitivity- Runs severity fixtures and human-review guidance for finding quality.

calibrate_ux_severity- Compatibility alias for sensitivity calibration.

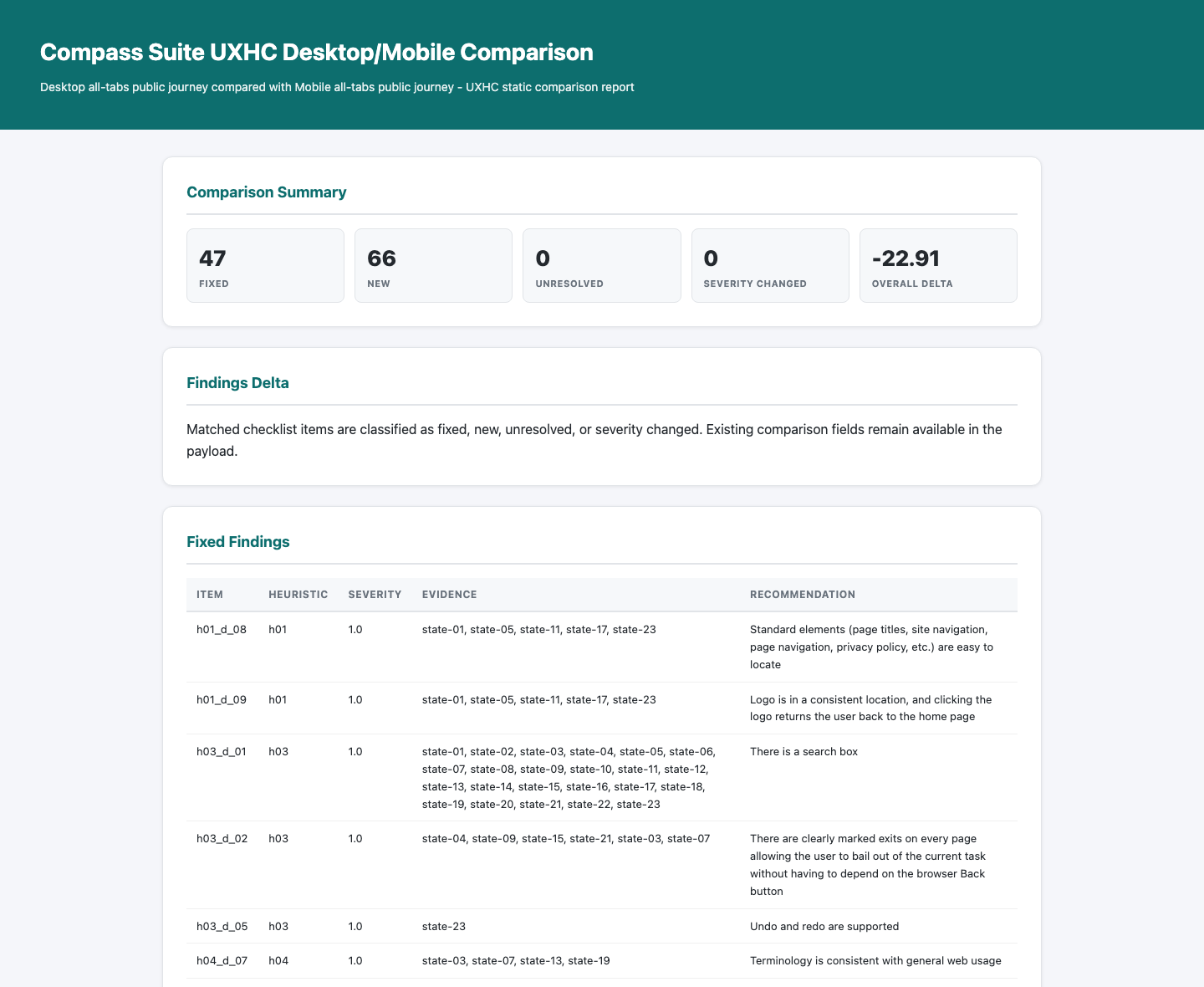

compare_ux_audits- Compares two audit payloads and labels fixed, new, unresolved, or severity-changed findings.

draft_project_addon_profile- Drafts an opt-in project-specific checklist profile without changing the core score.

save_preferences- Saves complete onboarding preferences for repeated audits.

update_preferences- Updates saved audit preferences.

run_agent_audit- Aggregates a multi-persona UX audit and flags disagreement across evaluator perspectives.

get_agent_run- Retrieves a persisted multi-agent audit run by ID.

run_usability_test- Prepares bounded audience-persona usability evidence without full-site crawling or authenticated flows.

stress_test_heuristic_finding- Reviews one finding for UX fit, evidence limits, false-positive risk, and the smallest useful fix.
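The four labels that compare_ux_audits reports (fixed, new, unresolved, severity-changed) can be illustrated with a minimal sketch. The payload shape here is an assumption: each audit is reduced to a hypothetical mapping of finding ID to a severity number, and `compare_findings` is an illustrative helper, not the tool's actual implementation or signature.

```python
def compare_findings(before: dict[str, int], after: dict[str, int]) -> dict[str, list[str]]:
    """Label findings across two audits.

    Both arguments map a finding ID to a severity number. This shape is
    hypothetical; the real UXHC audit payload is not documented here.
    """
    labels: dict[str, list[str]] = {
        "fixed": [],
        "new": [],
        "unresolved": [],
        "severity_changed": [],
    }
    for finding_id in sorted(before.keys() | after.keys()):
        if finding_id not in after:
            labels["fixed"].append(finding_id)             # present before, gone now
        elif finding_id not in before:
            labels["new"].append(finding_id)               # first seen in the later audit
        elif before[finding_id] != after[finding_id]:
            labels["severity_changed"].append(finding_id)  # still present, severity moved
        else:
            labels["unresolved"].append(finding_id)        # still present, same severity
    return labels


# Illustrative IDs and severities only.
first_audit = {"progress-loss": 4, "no-exit-path": 3, "loading-feedback": 2}
second_audit = {"no-exit-path": 3, "loading-feedback": 1, "vague-error-copy": 2}
print(compare_findings(first_audit, second_audit))
```

Keying on a stable finding ID is the design choice that makes the comparison meaningful; if two audits name the same problem differently, it would show up as one fixed and one new finding.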

How To Use It

Start with a bounded source: one screenshot, one app screen, a small page capture, or a short named task flow. Tell the AI what the user is trying to do, what platform matters, and whether you want the optional profiles included. UXHC works best when the evidence is specific and the task is clear.

For a fast pass, use the quick audit prompt and ask for the top findings only. For a fuller review, use the checklist audit so the AI has to separate observed evidence from inference and return scored issues. For more sensitive or ambiguous work, use the advanced human-loop prompt so the host asks context and calibration questions before finalizing severity.
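To make the idea of scored checklist ratings concrete, here is a rough sketch of how 0-4 ratings could roll up into a percentage. This is an assumption for illustration only: the page does not state UXHC's actual formula, weighting, or grade bands, and the sketch assumes that 4 is the best rating. `health_percent` is a hypothetical helper.

```python
MAX_RATING = 4  # top of the 0-4 checklist scale; assumes higher is better


def health_percent(ratings: list[int]) -> float:
    """Express the average of 0-4 ratings as a percentage of the maximum.

    Illustrative only: UXHC's real scoring contract may weight items or
    heuristics differently.
    """
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r < 0 or r > MAX_RATING for r in ratings):
        raise ValueError("ratings must be between 0 and 4")
    average = sum(ratings) / len(ratings)
    return round(average / MAX_RATING * 100, 1)


# Hypothetical ratings for one heuristic's checklist items.
print(health_percent([4, 3, 2, 3, 2]))
```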

When you need a shareable deliverable, use the MCP flow: prepare the source, run the audit, validate the report payload, then generate the report. The Example tab shows the intended shape: a plain-language readout, a scorecard, prioritized fixes, and evidence limits rather than a wall of generic advice.
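The four-step MCP flow described above can be written down as an ordered sequence. The tool names come from the UXHC tool list; mapping "run the audit" to audit_ux_surface is an assumption (quick_scan_ux may fit a compact pass), and `next_tool` is a hypothetical helper for illustration, not part of the package.

```python
from typing import Optional

# Tool names are taken from the UXHC tool list; the order follows the
# "prepare, audit, validate, generate" flow. The helper itself is hypothetical.
REPORT_FLOW = (
    "prepare_ux_source",
    "audit_ux_surface",
    "validate_ux_report_payload",
    "generate_ux_audit_report",
)


def next_tool(completed: list[str]) -> Optional[str]:
    """Return the next tool in the flow, or None once the report is generated."""
    expected = list(REPORT_FLOW[: len(completed)])
    if completed != expected:
        raise ValueError(f"steps out of order: {completed}")
    if len(completed) == len(REPORT_FLOW):
        return None
    return REPORT_FLOW[len(completed)]


print(next_tool([]))
print(next_tool(["prepare_ux_source", "audit_ux_surface"]))
```

The point of the ordering is that validation sits between the audit and the report, so an incomplete payload is caught before any artifact is written.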

Install Guide

Choose the install path for the Claude surface you use. UXHC beta.1 packages are available now; manual install testing in each target client is still tracked separately from package build verification.

Claude Code / Cowork Plugin

- Download ux-heuristic-compass-plugin.zip.

- Install it through the current Claude Cowork or Claude Code plugin flow.

- Run get_started or use the first prompt on the Get Started tab.

Claude Desktop Extension

- Download ux-heuristic-compass-0.1.0.mcpb.

- Open Claude Desktop settings and find Extensions.

- Install the MCPB file and approve the standard local-extension warning.

- Ask Claude to use UX Heuristics Compass for a quick audit.

Skill File or Skill Zip

- Download ux-heuristic-compass.skill or skill.zip.

- Add it through your host app's skill import flow.

- Use the Skill Audit prompt on the Get Started tab.

Source Install

Technical users can download ux-heuristic-compass-source.zip, inspect the source, run the self-test, and connect the MCP manually. This path is useful for review, development, or local troubleshooting.

If Install Fails

Use the diagnostic prompt in the Advanced tab before editing files manually. Most failed installs come from an unavailable package, a host app that has not reloaded the MCP, or a path that needs local-machine adjustment.

Example

See what a finished UX Heuristics Compass audit can look like. This example is fully public; nothing has been redacted.

Finished Audit Snapshot

This public-safe example shows UX Heuristics Compass turning bounded page evidence into a scored heuristic audit, a plain-language readout, prioritized findings, evidence limits, and recommended next validation steps.

Example output: B- / 68.5%

What this example shows

This example shows how UX Heuristics Compass turns a provided source into a ready-to-read audit report. It does more than list UI issues. It identifies progress loss as the highest-priority problem because it breaks the core learning loop, then separates what was observed from what still needs validation.

- UX Health Snapshot: gives the grade, percentage score, plain-language read, and the highest-priority before-use warning.

- Scorecard: shows how each heuristic performed and where the biggest friction concentrates.

- Critical findings: names the specific interface failures that most affect the learner journey.

- Evidence limits: explains what was observed directly and where a fuller accessibility or usability pass is still needed.

- Prioritized fixes: turns findings into a practical repair sequence instead of a loose critique list.

- Next validation: points toward the follow-up checks that would make the audit stronger.

| Aspect | Summary |
| --- | --- |
| Top finding | Progress is lost for all users, including logged-in accounts. |
| Primary themes | Progress persistence, lesson loading feedback, visible exit path, and screen-reader loading state. |
| Evidence limit | The audit is bounded to selected pages and is not a full WCAG compliance test. |

Output Snapshot

These snapshots show the report-style output: a scorecard with the overall grade, then a compact plain-language read that turns the audit into a usable decision point.

Claude Conversation Snapshot

These are Claude Code conversation screenshots from the audit flow. Claude chat and Claude for Work can produce similar results, with some variation based on the model, host surface, available tools, and evidence supplied.

Bonus: Human + AI Output

This bonus example shows a follow-up synthesis where Claude combines UXHC results with a human evaluation panel and another AI evaluation. This is a demonstration of what Claude can do with the audit output afterward; it is not an inherent MCP report function at this stage.

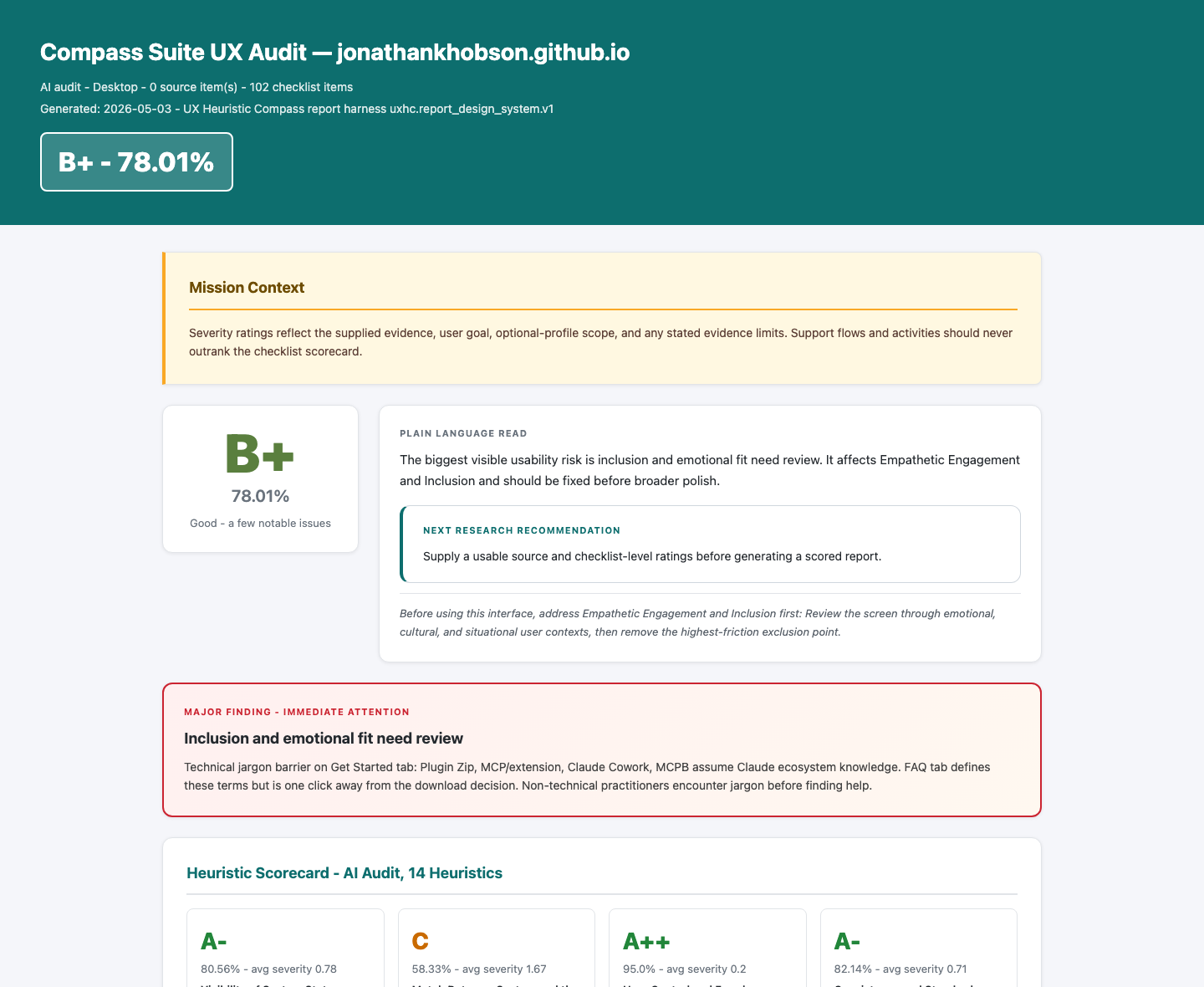

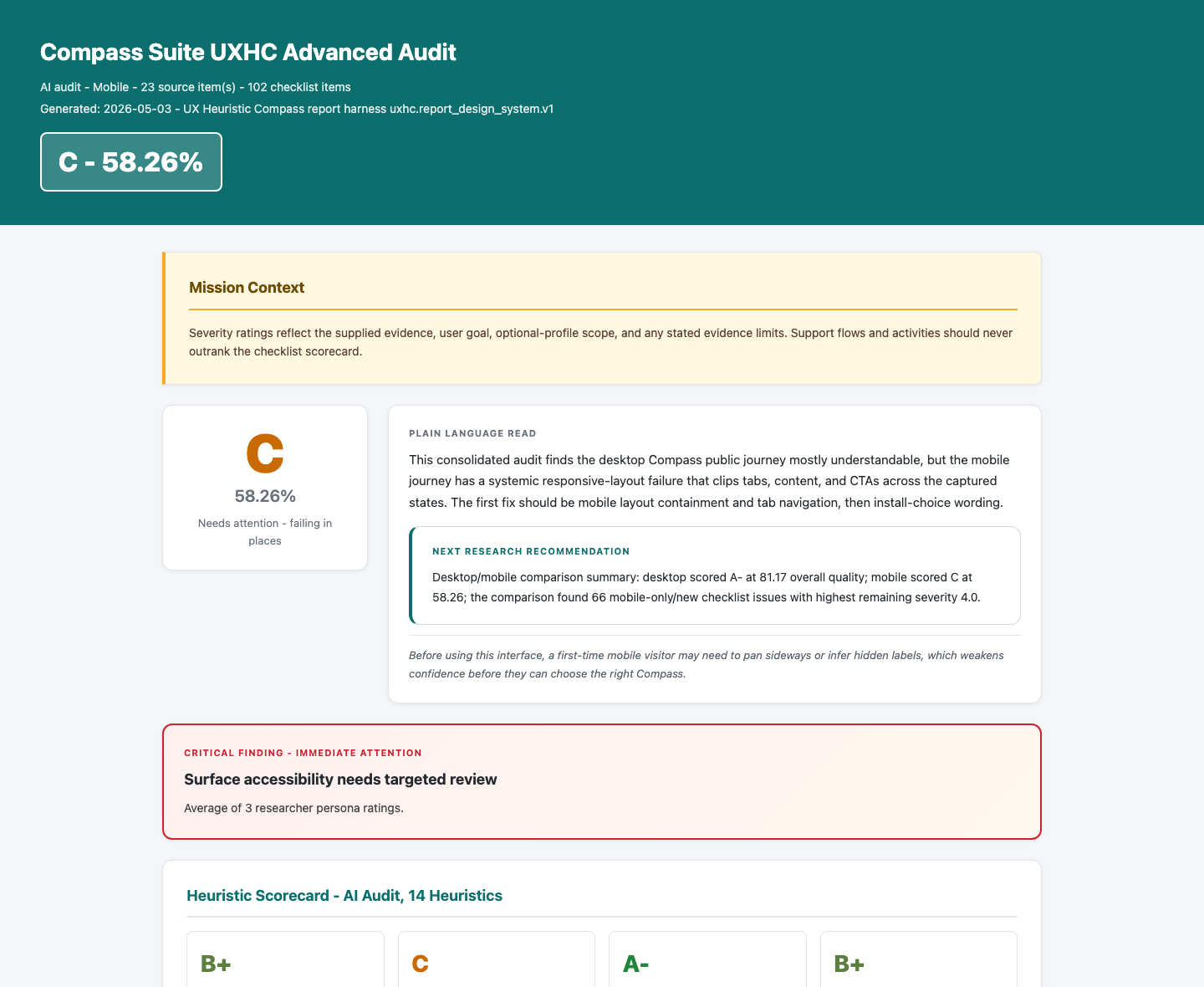

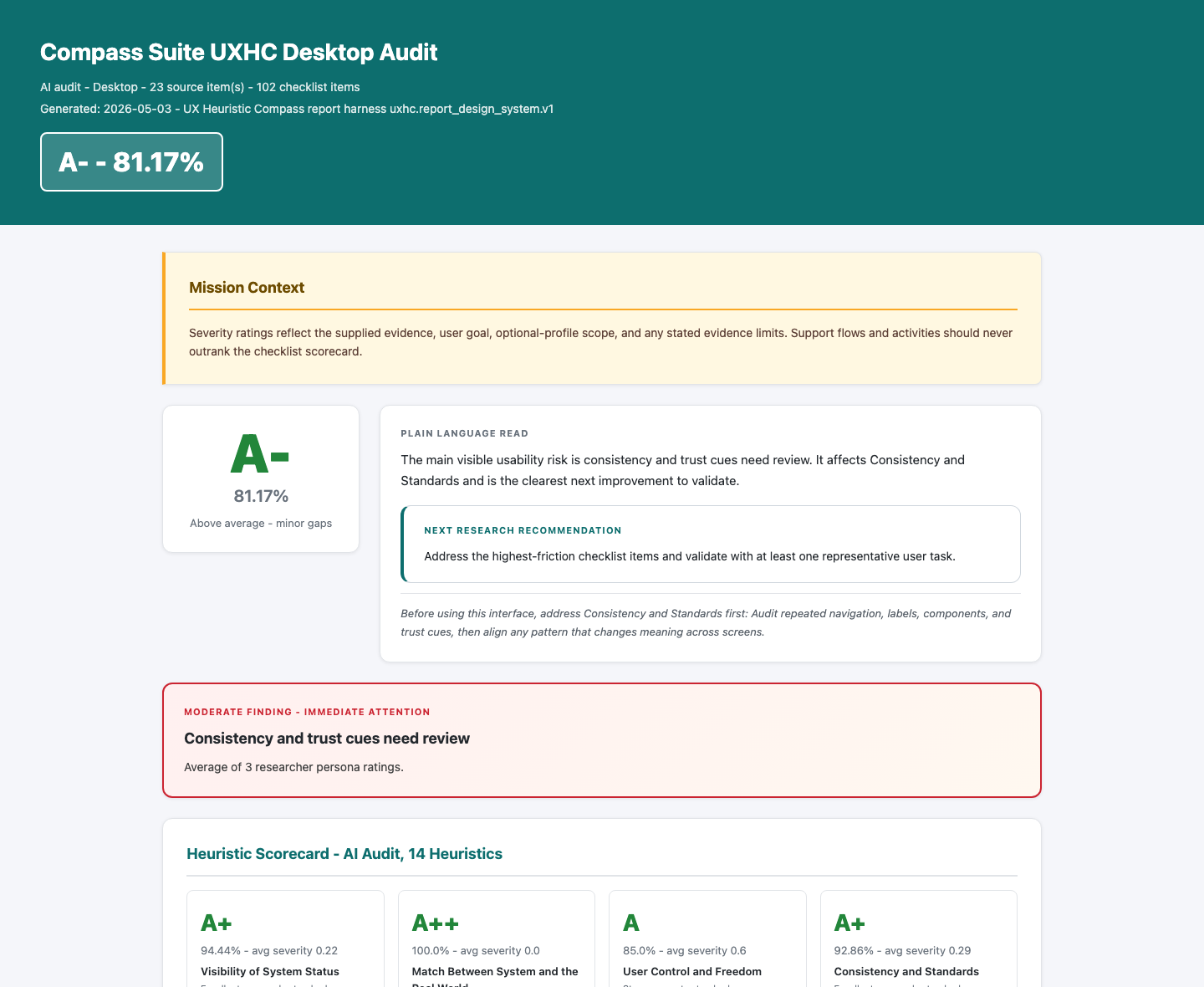

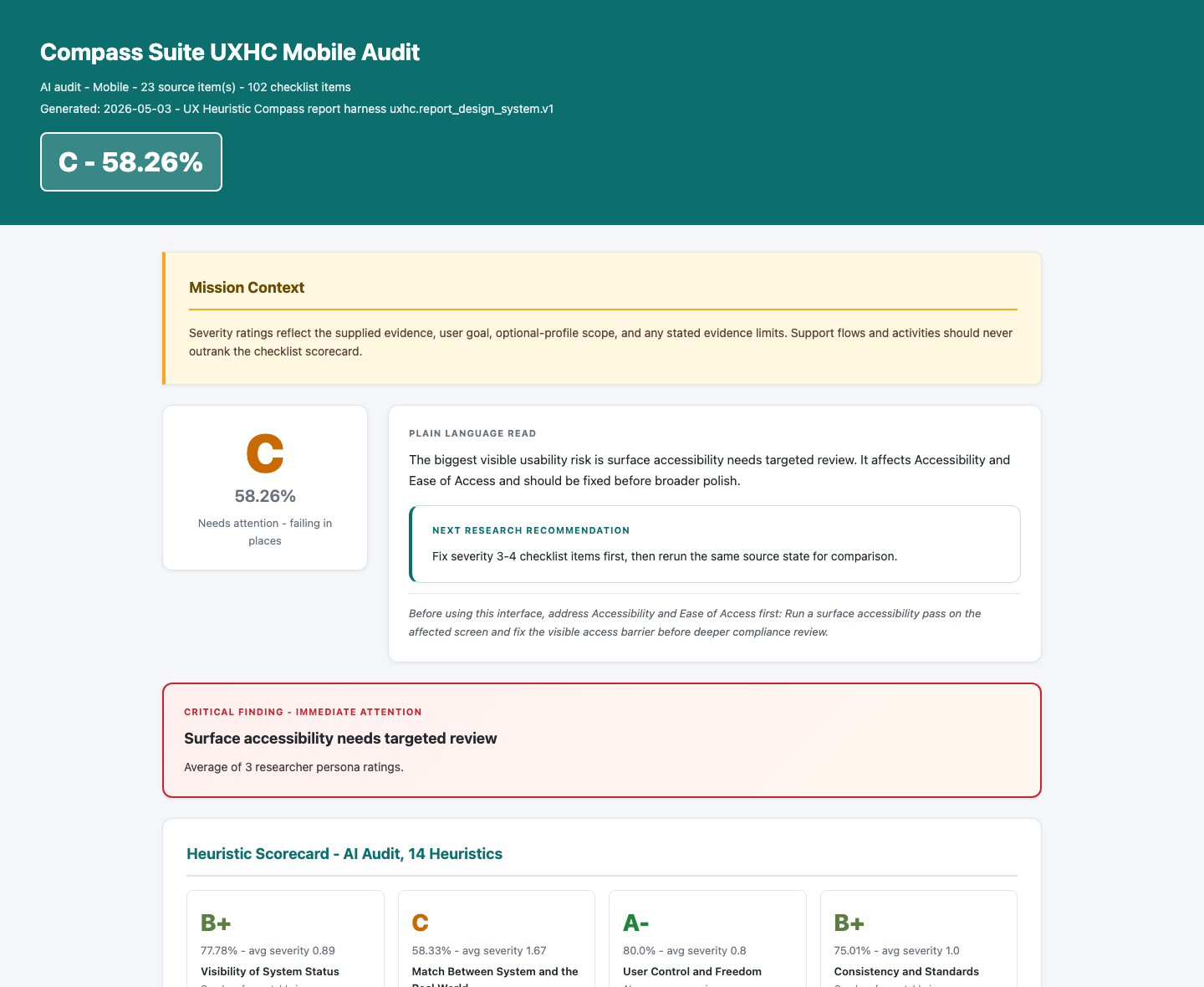

Finished Audit Snapshot

This example shows UX Heuristics Compass evaluating the public Compass Suite surface across multiple audit sources. It includes a merged Claude + Codex read, separate desktop and mobile reports, and a desktop/mobile comparison so the reader can see where the experience holds up and where mobile stress changes the priority order.

Example output: 3-source suite audit

What this example shows

This example shows how UXHC can move from a single product-page audit into a cross-surface public-site review. It is useful for seeing how separate evaluations can be compared, merged, and turned into a remediation roadmap.

- Multi-source scoring: compares Claude and Codex reads instead of treating one model output as final.

- Desktop/mobile differences: shows how the same site can score well on desktop while mobile exposes higher-friction issues.

- Remediation triage: turns findings into a high-impact, low-effort patch sequence.

- Heuristic coverage: keeps the 14-heuristic system visible across accessibility, writing, journey, recovery, and visual hierarchy.

- Agreement and disagreement: makes it easier to see which issues repeat across evaluators and which need human judgment.

- Public-surface closeout: connects the audit to a concrete Pages hardening pass rather than leaving it as critique.
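The agreement-and-disagreement idea in the list above can be sketched as a simple spread check across evaluators. The data shape, the heuristic keys, the scores, and the threshold are all assumptions for illustration; `flag_disagreements` is a hypothetical helper and does not describe how run_agent_audit aggregates results.

```python
def flag_disagreements(
    scores_by_evaluator: dict[str, dict[str, float]],
    threshold: float = 1.0,
) -> list[str]:
    """Return heuristics whose scores differ across evaluators by more than threshold.

    scores_by_evaluator maps an evaluator name to its per-heuristic scores.
    Heuristics scored by fewer than two evaluators are skipped.
    """
    heuristics: set[str] = set()
    for scores in scores_by_evaluator.values():
        heuristics.update(scores)

    flagged = []
    for heuristic in sorted(heuristics):
        values = [s[heuristic] for s in scores_by_evaluator.values() if heuristic in s]
        if len(values) >= 2 and max(values) - min(values) > threshold:
            flagged.append(heuristic)
    return flagged


# Hypothetical scores on a 0-4 scale from two model reads.
reads = {
    "claude": {"error_recovery": 1.5, "ux_writing": 3.0, "accessibility": 2.0},
    "codex": {"error_recovery": 3.0, "ux_writing": 3.5, "accessibility": 2.5},
}
print(flag_disagreements(reads))
```

Items that land in the flagged list are the ones worth sending to a human reviewer rather than averaging away.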

Output Snapshot

These snapshots are captured directly from the HTML reports. They are meant to show the shape of the finished artifacts before you open the full examples.

Browse the HTML Reports

Use the buttons below to preview each report inside this tab. The new-tab links above remain the most reliable way to read or share a full report.

Frequently Asked Questions

Quick answers about UXHC, heuristic evaluation, MCPs, and install expectations.

What does UX Heuristics Compass actually do?

It helps an AI assistant review a visible interface with a structured UX checklist instead of giving generic design commentary. It returns scoped findings, severity, evidence limits, and fixes to prioritize.

What are UX, usability, and user experience?

UX means user experience: what it feels like for someone to use a product, service, website, or app. Usability is the part of UX focused on whether people can understand, navigate, complete tasks, recover from mistakes, and do what they came to do without unnecessary friction.

What is a heuristic evaluation, and what are Nielsen's heuristics?

A heuristic evaluation is a structured expert review. Instead of waiting for a full research cycle, a reviewer checks an interface against known usability principles and flags likely problems. Nielsen Norman Group's 10 usability heuristics are one of the most widely used sets of principles for this kind of review. UXHC uses those 10 as the core, then adds four optional profiles: accessibility and ease of access, empathetic engagement and inclusion, customer journey and satisfaction, and UX writing.

Can this audit code?

It can use implementation evidence when a host AI provides it, such as rendered previews, source notes, browser observations, or external audit results. UXHC does not replace code review or accessibility testing, and implementation evidence must still be mapped back to visible usability findings.

What is an MCP connector or extension?

MCP means Model Context Protocol. In this context, Claude may call the local tool connection an MCP, connector, or extension. UXHC's extension is the MCP connector: it gives Claude structured local tools for preparing sources, running audits, validating reports, and generating artifacts. Learn more about MCPs.

What is the difference between the plugin, extension, and skill?

Plugin zip: the Claude Cowork or Claude Code bundle. Extension file: the Claude Desktop MCP connector. Skill: the prompt-only alternative a host can read without the MCP. You can install more than one when the release assets are available.

Does this work with Codex?

Yes. Codex can work with local MCPs, source installs, and prompt guidance. This beta.1 landing page does not provide a one-click Codex install path yet; technical Codex users should wait for the source package and AI-assisted install prompt.

Is UX Heuristics Compass safe to install?

Downloading anything from the internet carries real risk: malicious code, data collection, hidden scripts. UXHC is designed to reduce that surface area: it runs locally, uses bounded files, and does not need an external service for core workflows. No third-party tool is zero risk, so review the source or ask a trusted technical person before installing. Claude's warning is the standard prompt it shows for local third-party extensions, not a flag specific to this tool. Learn more about MCP risks.

Do I need a GitHub account to download?

No. Once the release assets are published, the buttons will go directly to the files. GitHub is only where the files are hosted.

Advanced

This section is for IT reviewers, security-conscious users, and anyone troubleshooting installation.

Download source files

Use this zip if you want to inspect, modify, or rebuild UX Heuristics Compass yourself. It excludes private state, generated release files, env files, caches, and machine-specific artifacts.

Advanced: Verify Download

These SHA-256 checksums help technical reviewers confirm that downloaded files match this release.

| File | SHA-256 |
| --- | --- |
| ux-heuristic-compass-plugin.zip | f54afb8be72d067c2b315dfff444300b5c27fe30b4447334cb9829fea33ea397 |
| ux-heuristic-compass-0.1.0.mcpb | 06fa89dcab73aea50297510c7568ee5b5ef205daf25e69b3815bf4f776f1b9a4 |
| ux-heuristic-compass.skill | 2ce183910446ac132e3a60f94bb7fa722d0719990dc2594e19b988775e2fa450 |
| skill.zip | 13ab2212ef345df6bfb4d4c21ed080e75175ccd8b73d12c83f38197f735f03e4 |
| ux-heuristic-compass-source.zip | f55a9795f21e540e94eb002cf85ec40614303e49b45ab2ab27abd2c02e94efaa |
| checksums.txt | SHA-256 manifest |
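One way to check a download against the published checksums is a short script using Python's standard hashlib module. This is a minimal sketch: the digests are copied from the list above, while `sha256_of` and `verify` are illustrative helper names, and the script assumes the downloaded file keeps its original file name.

```python
import hashlib
from pathlib import Path

# Digests copied from the published beta.1 checksum list.
EXPECTED = {
    "ux-heuristic-compass-plugin.zip": "f54afb8be72d067c2b315dfff444300b5c27fe30b4447334cb9829fea33ea397",
    "ux-heuristic-compass-0.1.0.mcpb": "06fa89dcab73aea50297510c7568ee5b5ef205daf25e69b3815bf4f776f1b9a4",
    "ux-heuristic-compass.skill": "2ce183910446ac132e3a60f94bb7fa722d0719990dc2594e19b988775e2fa450",
    "skill.zip": "13ab2212ef345df6bfb4d4c21ed080e75175ccd8b73d12c83f38197f735f03e4",
    "ux-heuristic-compass-source.zip": "f55a9795f21e540e94eb002cf85ec40614303e49b45ab2ab27abd2c02e94efaa",
}


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(path: Path, expected: dict[str, str] = EXPECTED) -> bool:
    """True when the file's digest matches the published value for its name."""
    want = expected.get(path.name)
    return want is not None and sha256_of(path) == want.lower()
```

For example, `verify(Path("ux-heuristic-compass-source.zip"))` returns True only when the local file matches the published digest. A False result means the file is unknown, renamed, incomplete, or altered, and it should not be installed.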

AI-Assisted Install Prompt

Use this prompt when you want a careful AI assistant to help inspect and install the package.